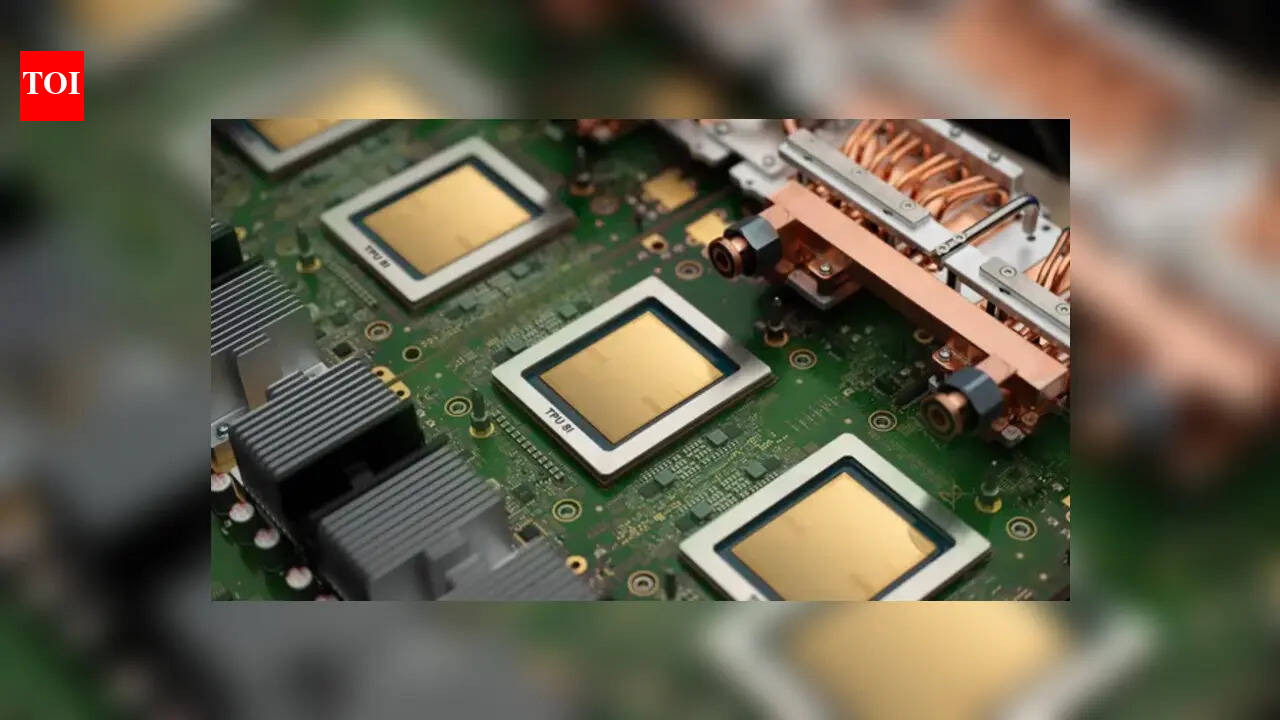

Google has unveiled its latest Tensor Processing Units – the TPU 8i and TPU 8t – designed specifically for the “Agentic Era”, at Google Cloud Next 2026. By optimising these chips for autonomous AI agents that perform complex tasks on a user’s behalf, Google is sending Nvidia a clear message: it intends to challenge the chipmaker’s silicon supremacy. Essentially, Google’s edge lies not just in specialised chips but in a fully integrated AI stack fine-tuned for the demands of agentic AI.

AI agents need to reason, plan and execute multi-step workflows, and the TPU 8i is designed to let them do so quickly enough to provide a good user experience. Complementing the TPU 8i, the TPU 8t is optimised for training and can run even the most complex models on a single, massive pool of memory.

“Along with our full-stack purpose-built infrastructure, which is from networking to data centers and energy-efficient operations, they create the underlying engine that will allow us to bring highly responsive agentic AI to the masses,” the company said.

What are TPU 8t and TPU 8i, and how Google is challenging Nvidia

As AI evolves, Google is moving away from ‘one-size-fits-all’ hardware. Instead, it has designed two distinct chips to handle the two different sides of AI work.

TPU 8t is optimised for training the world’s most complex AI models. According to Google, it can scale up to 9,600 chips working together as one giant super-machine and offers 3x the processing power of previous generations while being twice as energy-efficient. The chip is designed to build the “brains” of AI faster than ever before.

TPU 8i is for inference, the process of an AI actually running and responding to users. Google claims that the new chip delivers 80% better performance per dollar, meaning companies can run millions of AI agents at the same time without breaking the bank. It is designed for speed, ensuring AI agents “think” and “react” fast enough to feel like a real-time assistant.

While Nvidia’s GPUs currently remain the gold standard for AI, Google believes it has an advantage. Unlike Nvidia, which only makes the chips, Google builds everything: it designs the chips, writes the AI models (like Gemini 3), and owns the massive data centers where they run.

This “full-stack” approach allows Google’s hardware and software teams to trade secrets, fine-tuning the chips to run Google’s specific AI code perfectly.

“It now becomes sensible to specialize chips more for training or more for inference,” said Google Chief Scientist Jeff Dean in a recent interview. By splitting the workload, Google hopes to slash the response times that currently make chatbots feel slow.

Big names are already signing up for Google TPUs

The market is already shifting. While Nvidia recently licensed technology from Groq to speed up its own chips, Google is already landing massive contracts:

Meta (Facebook/Instagram): The social media giant has signed a multi-billion dollar deal to use Google’s TPUs over several years. “It does look like there might be inference advantages,” said Santosh Janardhan, Meta’s head of infrastructure, while noting that “no new platform is without hurdles and a learning curve.”

Anthropic: One of the most famous AI developers has secured access to as many as 1 million TPUs to build its next-gen models.

What’s noteworthy is that Google is not cutting off Nvidia entirely. Recognising that customers want choices, Google Cloud will continue to offer a variety of options, including its own Axion CPUs, the new TPUs, and even the latest Nvidia GPUs.

However, with the launch of the 8-series, Google has made its intentions clear: when it comes to the future of AI agents, it wants to be the one providing the foundation to the industry.